Gesture-Based Performance System (2018)

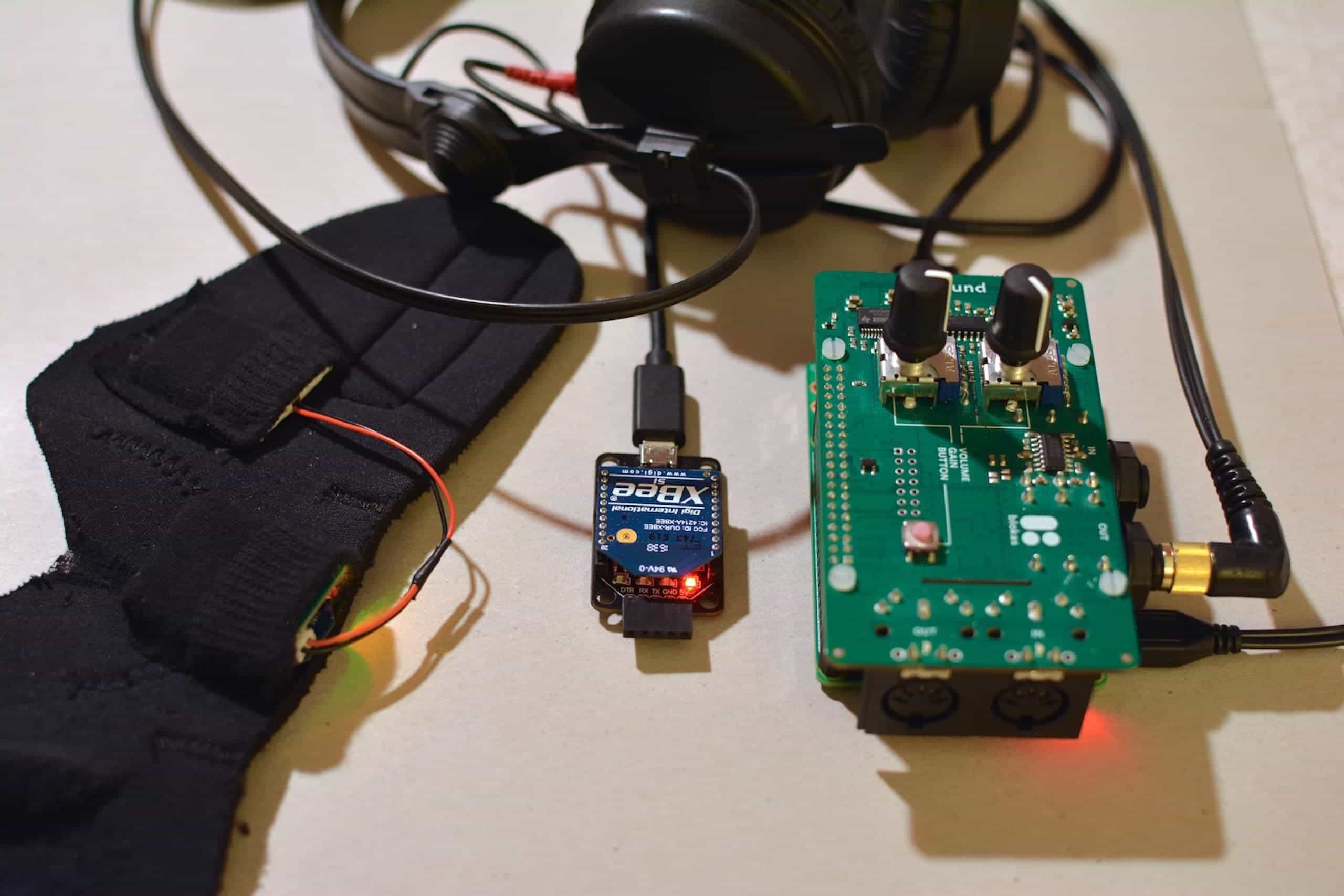

The “Gesture-based Performance System” (GePS) is a digital musical instrument (DMI) designed to enable intuitive gestural control of electronic sound modules. Through a small sensor unit worn on the wrist, the DMI captures gestural data from the hand and arm and uses it to control a sampler and synthesis engine.

The project was motivated by the apparent disconnect between performer movement and instrument sound found in many electronic instruments. A player's bodily involvement is rarely a concern of the instrument designer and therefore risks being ignored by the performer as well. This, in our opinion, can make performers of electronic music seem detached from their music and thereby harm performances. Exploring one possible solution, we designed our DMI's control mappings around movement-sound relationships that are intuitive for the performer and clear for the audience. We achieved this through transparent control metaphors: to benefit from the listener's and performer's natural understanding of the physical processes around them, we used gestures with strong physical associations and mapped them to sound modules specifically designed to represent these associations sonically. The sound aesthetics were oriented towards the sonic language of acousmatic music, as it lends itself particularly well to associations with physical processes. When playing the instrument, every gesture performed has a corresponding sound and every sound has a corresponding gesture. As a result, the performer's bodily involvement is determined by the DMI's design and is seen as necessary and justified from the audience's perspective.